Discover what the highest rated Respiratory CRO has to offer.

Sep 4, 2025 11:00 AM EDT

Discover what the highest rated Respiratory CRO has to offer.

Discover what the highest rated Dermatology CRO has to offer.

Sep 4, 2025 11:00 AM EDT

Discover what the highest rated Dermatology CRO has to offer.

Discover what the highest rated Psychiatry CRO has to offer.

Sep 4, 2025 11:00 AM EDT

Discover what the highest rated Psychiatry CRO has to offer.

Discover what the highest rated Women's Health CRO has to offer.

Sep 4, 2025 11:00 AM EDT

Discover what the highest rated Women's Health CRO has to offer.

Discover what the highest rated Medical Device CRO has to offer.

Sep 4, 2025 11:00 AM EDT

Discover what the highest rated Medical Device CRO has to offer.

Discover what the highest rated Digital Therapeutics CRO has to offer.

Sep 4, 2025 11:00 AM EDT

Discover what the highest rated Digital Therapeutics CRO has to offer.

Dr. Billings brings over 40 years of experience spanning academia, government, and industry. He has deep expertise in the diagnostics sector, having previously served as Chairman and CEO of Biological Dynamics, a molecular diagnostics company, and as Chief Medical Officer of Natera, a leading genetic testing company focused on oncology, women’s health, and organ health.

Rob is a Director of Strategic Solutions at Lindus Health, overseeing the design and execution of large-scale clinical trials with a focus on diagnostics and screening studies. He has extensive experience in healthcare product development and clinical trial strategy. With a background in behavioral and clinical science, Rob specializes in translating complex operational challenges into scalable, innovative trial designs.

FDA submissions for liquid biopsy and MCED screening trials depend on thorough cancer verification: every confirmed or suspected cancer case must have documented evidence of presence, type, and stage through diagnostic workup, medical records, and imaging confirmation. Producing that level of evidence means converting unstructured medical records collected from hundreds of healthcare facilities with different formats into structured, regulatory-grade data. At the scale these trials operate, that conversion is the central operational challenge.

"The data pipeline, from medical record retrieval to structured EDC output, has to be designed for regulatory scrutiny from the start. Retrofitting data quality into an operational model that wasn't built for it creates avoidable risk at exactly the wrong stage of a program." - Dr. Paul Billings

This is Part 3 of a three-part series on operational strategies for large-scale liquid biopsy screening trials. While site-based models remain the standard for many protocols, this series focuses on the operational challenges of decentralized and hybrid designs, where data quality requirements are the same but the infrastructure to meet them looks different. Part 1 covered enrollment models and how to select the right approach for a given protocol, including integrating virtual enrollment with existing site infrastructure. Part 2 described the infrastructure for retrieving medical records nationwide, and the triage logic for directing record pulls where they're needed. This article picks up where those records arrive:

Clinical validation studies and clinical utility studies both require meticulous source documentation, but the operational burden differs between them.

A validation study establishes test performance: sensitivity, specificity, PPV, and NPV. Every misclassified, mis-staged, or missing case flows directly into those calculations. A single undocumented cancer in a large-scale trial shifts the sensitivity denominator, and when you're demonstrating detection across rare cancer types, even small errors can fall outside prespecified confidence intervals. The quality challenge is concentrated at the point of diagnosis.

A utility study carries an additional burden: demonstrating that early detection changes outcomes through stage shifts, earlier treatment, or survival benefit. The same rigor has to extend across years of treatment documentation and follow-up. The abstraction pipeline has to maintain the same accuracy at year four that it had at year one. The solution described below serves both.

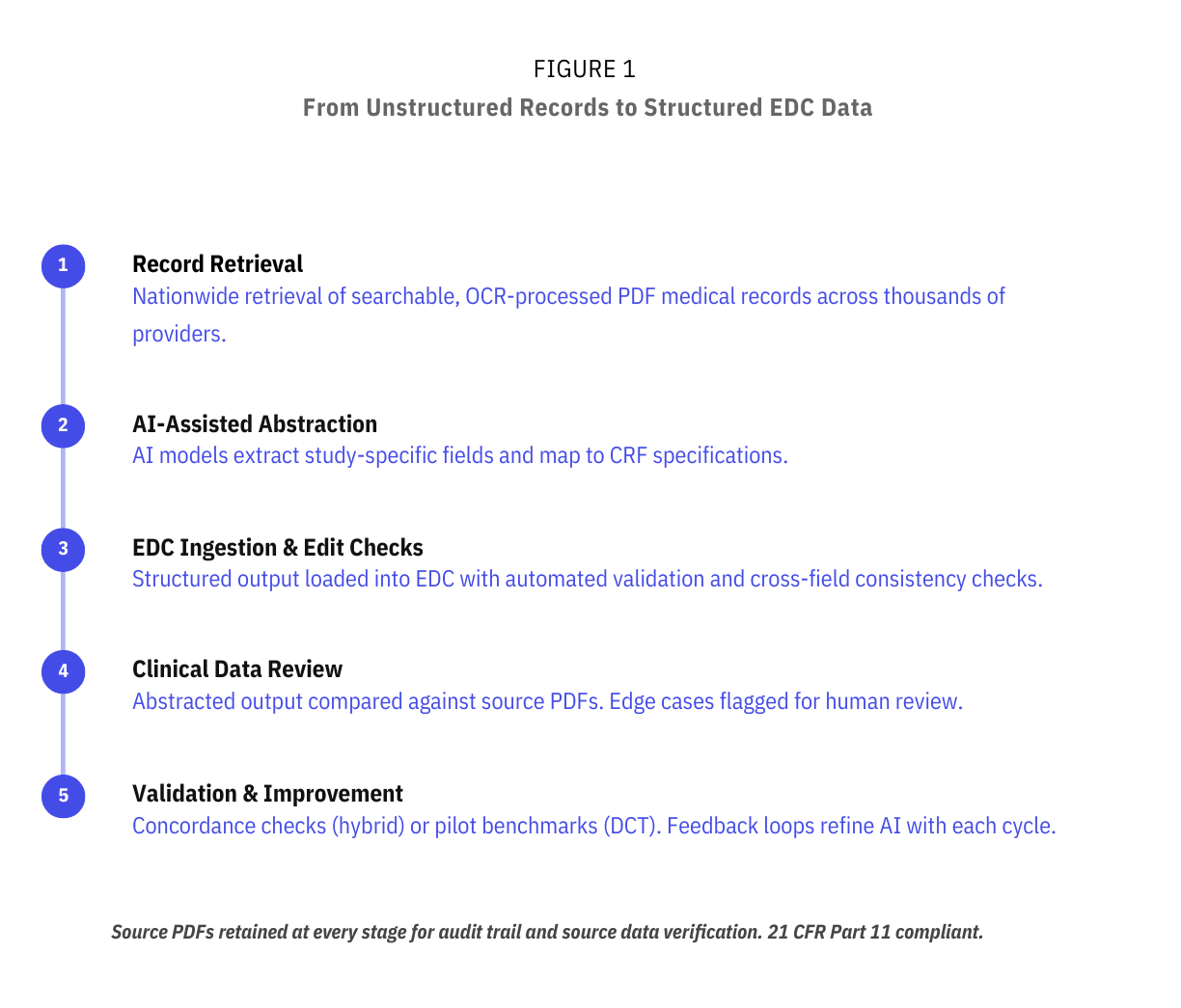

Medical records typically arrive as searchable, OCR-processed PDFs covering encounters, procedures, labs, imaging reports, pathology results, diagnoses, and treatments. At trial volumes of tens of thousands of participants, manual abstraction is usually not commercially viable. Therefore, it is desirable for the pipeline to be automated, whilst maintaining high accuracy for the endpoints described above. Figure 1 outlines this end-to-end pipeline.

As mentioned in Part 2, AI models process these PDFs to extract study-specific fields and map them to CRF specifications: prior screening history, comorbidities, cancer history, key dates, and staging. Structured output is loaded into the study EDC with automated edit checks, including range and format validation and cross-field consistency checks against CRF definitions. This pipeline is designed to deliver structured clinical outcomes into the EDC within days of record receipt, compared to the weeks typical of manual workflows.

Our experience on active diagnostic trials has shown that direct data mining of full PDF medical records often yields richer, more complete data than templated site EHR exports. When a site exports data from an EHR warehouse, the output is limited to whatever discrete fields the system captures. But the full PDF record includes operative notes, pathology narratives, radiology impressions, and clinical notes where physicians document staging rationale, treatment decisions, and diagnostic reasoning in free text. A 2025 ASCO abstract found that an LLM-based extraction pipeline achieved an F1 score of 0.85 or above for extracting cancer diagnoses, histology, grade, and staging from unstructured EHR text and scanned documents, with performance validated across 40 cancer types using both pathology reports and clinical progress notes.

The nationwide retrieval model described in Part 2 also has a direct data quality implication. A participant who receives a cancer diagnosis at a community hospital outside the trial network will appear in the virtual arm's record pull. They may not appear in the EHR export from the enrolling site at all. For a study measuring sensitivity and PPV, a missing case is a misclassified negative that degrades the test's apparent performance. For a utility study, missing treatment records from a secondary facility means incomplete longitudinal data. Retrieving records from multiple facilities adds per-patient cost. But missing a cancer case because records sat in a community hospital outside the trial network is a far more expensive problem at submission.

Accurate staging deserves particular attention because it sits at the intersection of the hardest extraction problem. While comprehensive staging is not always strictly mandated, it significantly strengthens regulatory submissions and supports label claims. Demonstrating test sensitivity across stages I through IV, and particularly showing early-stage detection, is central to the value proposition of MCED screening. Incomplete or inaccurate staging makes stage-specific performance claims unreliable, which narrows the label you can defend.

Published research in Frontiers in Digital Health found that staging information is missing from 10 to 50% of patient records in cancer registries, largely because staging data is scattered across unstructured clinical text rather than structured EHR fields. Insurance claims data, which captures diagnosis and procedure codes but rarely includes staging detail, is even less reliable for staging. Any data pipeline that relies on structured EHR exports or claims alone will systematically undercount staging information.

Fully decentralized and hybrid designs face different data quality risks, and the validation strategies need to reflect that. Figure 2 summarizes the two approaches.

.png)

In a fully decentralized model, there is no site-based arm generating a parallel data stream. All structured clinical outcomes come from the virtual pathway: participant-reported ePROs triggering record pulls, AI-assisted abstraction, and EDC ingestion. One method for evaluating data completeness and accuracy, as described in Part 1, is a pre-launch validation pilot: running the abstraction pipeline on a representative sample of records to establish field-level accuracy benchmarks before the study scales. The pilot should produce field-specific concordance rates so that the fields most critical to the primary endpoint (staging, histology, diagnosis date) have individually validated error bounds. The pilot also serves as the tuning phase for the AI inference rules: which facility documentation patterns cause extraction failures, which staging terminology variants need mapping, and where the model needs human override logic. Once live, quality is maintained through continuous AI-human review loops and automated edit checks across every data pull.

In a hybrid model, the site arm serves as a natural quality-control mechanism. Structured EHR extracts (warehouse CSVs or equivalent) from site participants can be compared directly against AI-abstracted PDF records for the same individuals, producing ongoing quantitative concordance data. This comparison is particularly valuable for the fields where discrepancies have the greatest impact on test performance calculations. Early results from active Lindus-managed large-scale diagnostic trials suggest that virtual arm data mining can match or exceed the depth of site-based collection, because the virtual pathway captures records from all facilities where a participant receives care, not only the enrolling site.

For example, in an active Lindus Health trial, pilot testing found that the virtual pathway returned a larger volume of clinical documentation than sites typically upload into the EDC for the same participants. Retrieval partners pull the full record set a facility has on file, including referring provider notes, external imaging, and consulting documentation, whereas sites may selectively upload a subset during routine data entry. The trade-off is that higher document volume requires more robust abstraction and review workflows to efficiently extract the relevant data fields, but it also means the raw source material is more complete for endpoint adjudication and source data verification.

Large-scale diagnostic trials require integrating data from multiple sources (AI-abstracted records, site EHR exports, imaging data, ePRO responses) into a unified, regulatory-compliant EDC. Incorporating a decentralized or hybrid pathway shouldn't create a parallel data infrastructure that increases monitoring complexity or requires a separate data management workstream.

Sponsors running trials at this scale typically have established EDC systems (Medrio, Medidata, Veeva etc). These systems can be integrated through direct data entry, API connectivity, or intermediate platforms with custom field mapping. At Lindus, our eClinical platform, Citrus™, can be configured as a source system that maps directly onto sponsor EDC data fields, enabling monitoring and data review within either system. On an active large-scale diagnostic trial run by Lindus, API integrations between the medical record retrieval partner and Citrus have been designed and tested, mapping abstraction fields directly to CRFs, eliminating manual data entry. That integration was designed so that structured output from the abstraction pipeline loads directly into sponsor-accessible CRF pages, with source PDFs retained and linked for source data verification. The Citrus™ platform is 21 CFR Part 11 compliant with a full audit trail, giving CRAs and medical monitors a single surface to review data, track protocol deviations, and report study alerts across both arms.

Staging is among the highest-risk fields in the abstraction pipeline, both because accurately extracting it is complex and because errors in the staging flow directly affect stage-specific performance claims. Each facility formats records differently, staging terminology varies across providers, and records from multiple facilities for the same patient can conflict (Figure 3). A community oncologist may document clinical stage in a progress note using shorthand, the surgical pathology report from a different facility uses formal AJCC notation, and a third facility's radiology report implies a different stage based on imaging findings. The abstraction system must reconcile all three, and the reconciliation rules must match the study's endpoint definitions. A JCO Clinical Cancer Informatics study found that manual abstraction of staging from surgical pathology reports is labor-intensive and error-prone, and that an NLP-based extraction pipeline achieved accuracy above 0.89 for TNM staging across multiple cancer subtypes.

.png)

Multi-layer validation addresses this. AI models extract structured staging data. Clinical data teams compare abstracted output against source PDFs, with a review rate that can be adjusted upward for fields or facility types with higher observed error rates. Edge cases (staging discrepancies between facilities, conflicting clinical vs. pathological stage, mislinked patient histories due to shared names or DOBs) are flagged for review and resolution. For fields with the lowest error tolerance, human abstractors manually key data into the EDC as a high-control fallback.

The abstraction pipeline that performs well on a few hundred records has to maintain accuracy at a volume of tens of thousands. New edge cases surface as volume grows: facilities that scan handwritten notes, labs that use nonstandard reporting formats, and records in which critical data spans multiple documents that must be linked by patient identifiers. Errors compound if they aren't caught systematically.

The evidence supporting hybrid AI-human abstraction workflows, discussed in Part 2, becomes even more important at scale. A 2025 Nature Communications randomised trial found that human-AI teams outperformed human-alone teams on prescreening accuracy for oncology clinical trials. The abstraction model should pair AI for throughput with human review for edge case resolution, and the review rate should be calibrated to the observed error distribution rather than fixed at an arbitrary percentage.

A continuous feedback loop between clinical reviewers and the AI model is designed to improve accuracy over time: edge cases are identified, fed back into the models, and inference rules are refined with each cycle. For utility studies, where the same abstraction process runs annually across the full cohort, this improvement trajectory matters because compounding errors over multiple cycles can degrade the longitudinal dataset on which the entire study is built.

In hybrid designs, site and virtual arms must produce reconcilable data within the same EDC. A known risk is field mapping discrepancies between arms. For example, different coding conventions for cancer type or staging system, which can introduce systematic errors that surface late and are costly to remediate during database lock. A site arm may capture staging using the EHR's native coding structure, while the virtual arm's abstraction pipeline maps staging from free-text records using a different convention. If those conventions aren't harmonized before data starts flowing, the discrepancy can compound across thousands of records.

Catching these early requires running concordance checks against actual mapped EDC data, not just against raw abstraction output. The validation pilot (for DCT) or the initial concordance analysis (for hybrid) should include end-to-end testing through the full mapping and ingestion pathway, confirming that the data, as it appears in the sponsor's EDC, matches the data as it was abstracted from the source PDF.

The retrieval infrastructure described in Part 2 covers the majority of records within standard SLA windows. But at scale, a portion of records will arrive late: facilities with slow release processes, rural providers outside the retrieval partner's in-network footprint, or records that require additional authorization. When a participant's staging data arrives weeks or months after the rest of their record has already been abstracted and loaded, the pipeline needs a defined process for supplementing existing EDC entries without creating duplicate or conflicting records.

On active trials managed by Lindus, this is handled by processing each new record pull for a participant against the existing abstracted data and flagging key clinical events (such as new cancer diagnoses or treatment changes) for human review. Clinical data teams review flagged records before updating the EDC, ensuring that late-arriving staging or treatment data is reconciled with what's already documented rather than overwriting it.

The three operational challenges covered across this series (enrollment velocity in Part 1, long-term data completeness in Part 2, and FDA-grade data quality here in Part 3) are interdependent.

The enrollment model determines the data quality risks. A fully decentralized design may benefit from pre-launch validation to establish baseline accuracy, since there's no site comparator running in parallel. A hybrid design creates a built-in concordance mechanism but demands tighter EDC integration across arms. The retention and triage infrastructure from Part 2 (ePRO engagement, claims cross-referencing, targeted record pulls) determines which records arrive in this pipeline and when. If the triage logic misses a cancer case because a participant disengaged and wasn't flagged for a record pull, the abstraction pipeline never gets the chance to process it. And when the abstraction system identifies a new cancer diagnosis, that finding triggers the follow-up workflows described in Part 2: additional record pulls, treatment documentation, and longitudinal data capture.

For sponsors evaluating operational partners at this scale, the question is whether these three systems work together, under regulatory scrutiny, for years. Lindus has built and validated this pipeline on active large-scale diagnostic trials, and our team has worked on some of the largest diagnostic screening trials in oncology, including studies with tens of thousands of participants and multi-year follow-up periods.

If you're planning or scaling a large screening trial and need confidence that your data pipeline will meet FDA evidence standards from day one, reach out to our team. We can help you design the operational model, validate it before you scale, and integrate it with your existing systems.

This is Part 3 (final) of a three-part series on operational strategies for large-scale liquid biopsy screening trials. Part 1 covers scaling enrollment through decentralized and hybrid models. Part 2 addresses maintaining complete long-term follow-up data across tens of thousands of participants.